‘Data quality’ has been a hot topic in the Atlas of Living Australia (ALA) for many years. In just about all the presentations I have ever given on the ALA, data quality comes up at question time.

A recent ZooKeys paper (Mesibov 2013) highlighted data quality issues in aggregated data sets in the ALA and GBIF. That admirable work by Bob was, however, enabled by the ALA’s exposure of integrated datasets. While we welcome Bob’s highlighting of data issues, the ALA and its international counterparts have been working diligently to minimise such errors, though not as fast as the community may wish.

Herbarium or museum records, or even a single collector’s records, are all aggregations of records taken at different times and by different collectors. The flow of biological observations can go from observer to end user through multiple digital aggregators. Bob Mesibov is also a data aggregator in his analysis of the Australian millipedes. At any node in the data flow, errors can be detected, introduced or addressed.

Data should be published in secure locations where they can be preserved and improved in perpetuity; the ALA is a good example of such a location. We are moving beyond storage of data by individuals or institutions that do not have a strategy for enduring digital data integration, storage and access.

One of the most powerful outcomes of publishing digital data is that inherent legacy problems are revealed despite the concerted work of dedicated taxonomists over decades or longer. Exposing data provides the opportunity for the community to detect and correct errors. Indeed, much of the admirable work achieved by Mesibov (2013) was enabled by having data exposed beyond the originating institutions.

The ability to identify and correct data issues is also the responsibility of the whole community and not of any single agent such as the ALA. Expert knowledge and automated processes need to be integrated seamlessly and effectively, so that all amendments become part of a persistent digital body of knowledge about species. Talented and committed individuals can make enormous progress in error detection and correction (as seen in Mesibov 2013), but how do we ensure that when a project like that on millipedes ceases, the data and all associated work are not lost? This implies standards for capturing and linking this information and for maintaining the data with all amendments documented. To achieve this, the biodiversity research community needs to be motivated and empowered to work in a collaborative fashion.

Data quality is of the highest concern, but data may not have to be 100% accurate to have utility in some projects. Quality issues affecting some users may be of secondary or no importance to others. For example, a locational inaccuracy of 20 km on a record will likely not invalidate its use in regional or continental scale studies. Access to information on a type specimen is likely to be of value even if georeferences are incomplete. The term ‘fitness for use’ may therefore be more appropriate than ‘data quality’ in many circumstances.
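The ‘fitness for use’ idea can be illustrated with a minimal Python sketch. Everything in it is an assumption made for illustration: the `Occurrence` record, its field names and the two thresholds are hypothetical and do not reflect the ALA’s data model or any recommended values.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Occurrence:
    # Hypothetical record; field names are illustrative, not an ALA schema.
    species: str
    uncertainty_km: Optional[float]  # None when no uncertainty is recorded


def fit_for_use(record: Occurrence, max_uncertainty_km: float) -> bool:
    """Return True if the record's locational uncertainty suits a given study."""
    if record.uncertainty_km is None:
        # Assumption: a record with unknown uncertainty is treated as unfit.
        return False
    return record.uncertainty_km <= max_uncertainty_km


record = Occurrence(species="Example species", uncertainty_km=20.0)

# The same record judged against two hypothetical study tolerances.
continental_ok = fit_for_use(record, max_uncertainty_km=50.0)  # coarse-scale study
local_ok = fit_for_use(record, max_uncertainty_km=1.0)         # fine-scale study
print(continental_ok, local_ok)
```

The record itself never changes; only the tolerance of the study does, which is why a single ‘quality’ score per record would be less informative than a test against the intended use.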

This is not an excuse to ignore errors, but a recognition that effective use depends on knowledge about the data. The goal of the aggregators is to understand how much confidence is appropriate in each element of each record and to enable users to filter data based on these confidence measures. The philosophy of most of the aggregators is therefore to flag potential issues, correct what is obviously correctable, and keep the associated records visible rather than hiding them.
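A rough Python sketch of the ‘flag rather than hide’ approach follows. It is not the ALA’s implementation: the `Record` class, the flag names and the two checks are hypothetical, chosen only to show that flagged records stay in the data set and that exclusion is left to the user.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Set


@dataclass
class Record:
    # Hypothetical occurrence record; not an ALA schema.
    record_id: str
    latitude: Optional[float]
    longitude: Optional[float]
    flags: List[str] = field(default_factory=list)


def flag_issues(record: Record) -> Record:
    """Attach flags for detectable issues; the record is never discarded."""
    if record.latitude is None or record.longitude is None:
        record.flags.append("MISSING_COORDINATES")  # hypothetical flag name
    else:
        if not -90 <= record.latitude <= 90:
            record.flags.append("LATITUDE_OUT_OF_RANGE")
        if not -180 <= record.longitude <= 180:
            record.flags.append("LONGITUDE_OUT_OF_RANGE")
    return record


def select(records: List[Record], excluded_flags: Set[str]) -> List[Record]:
    """Let the user decide which flags disqualify a record for their purpose."""
    return [r for r in records if not excluded_flags.intersection(r.flags)]


records = [
    Record("r1", -35.3, 149.1),
    Record("r2", None, None),
    Record("r3", -95.0, 149.1),
]
flagged = [flag_issues(r) for r in records]

# All three records remain available; filtering is a separate, user-driven step.
mappable = select(flagged, {"MISSING_COORDINATES", "LATITUDE_OUT_OF_RANGE"})
print([r.record_id for r in mappable])
```

Keeping detection (`flag_issues`) separate from selection (`select`) means a user who only needs taxonomic information can still retrieve a record whose coordinates are flagged.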

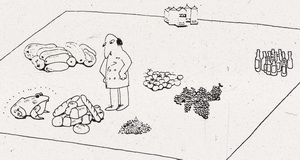

Some data quality issues can be detected without domain specific taxonomic expertise and some cannot. The correction of detected issues also may or may not require domain specific expertise. There are therefore four possible scenarios.

Specialist domain expertise is required to detect and correct many of the issues raised by Mesibov (2013). Agencies such as the ALA do not generally have this type of expertise. The ALA does, however, expose the data and provide infrastructure and processes that help to detect and address data issues. An example of the quality controls undertaken by the ALA can be seen here.

A more fundamental issue is that most biodiversity data today are managed and published through a wide range of heterogeneous databases and processes. Consistency is required for guaranteed, stable, persistent access to each data record and in establishing standardised approaches to registering and handling corrections. ‘Aggregators’ such as the ALA have a key role in addressing this challenge but ultimately it will depend on widespread changes in the culture of biodiversity data management.

Another component in the infrastructure supporting error detection and correction in the ALA is a sophisticated annotations service that uses crowd sourcing. Issues that are detected, along with potential corrections, are returned to the data provider. Note, however, that some data providers may not have the resources to address the issues, or indeed may no longer exist.

See Belbin et al. (2013) below for the full paper.

References

Belbin L, Daly J, Hirsch T, Hobern D, LaSalle J (2013) A specialist’s audit of aggregated occurrence records: An ‘aggregator’s’ perspective. ZooKeys 305: 67-76. doi: 10.3897/zookeys.305.5438

Chapman, AD (2005a). Principles and Methods of Data Cleaning – Primary Species and Species Occurrence Data, version 1.0. Report for the Global Biodiversity Information Facility, Copenhagen. 75p.

Chapman, AD (2005b). Principles of Data Quality, version 1.0. Report for the Global Biodiversity Information Facility, Copenhagen. 61p.

Costello MJ, Michener WK, Gahegan M, Zhang Z-Q, Bourne P, Chavan V (2012). Quality assurance and intellectual property rights in advancing biodiversity data publications version 1.0, Copenhagen: Global Biodiversity Information Facility, 40p, ISBN: 87‐92020‐49‐6.

Mesibov R (2013) A specialist’s audit of aggregated occurrence records. ZooKeys 293: 1-18. doi: 10.3897/zookeys.293.5111

Otegui J, Ariño AH, Encinas MA, Pando F (2013) Assessing the Primary Data Hosted by the Spanish Node of the Global Biodiversity Information Facility (GBIF). PLoS ONE 8(1):